The big market opportunity for AI pathology companies, though, lies not in the research setting. It’s in the standard diagnostic workups used to determine the nature of every cancer patient’s tumor—and to guide treatment options. There’s just one obstacle to seizing that market: The entire infrastructure of pathology has to change. “You can only use these algorithms if your slides are digitized before your pathologist goes to look at them for diagnosis, and there are not a lot of places that do that,” says Jeroen van der Laak, a computational pathologist from the Radboud University Medical Center, in the Netherlands.

Although many pathology labs now make digital copies of glass slides for archival purposes or after-the-fact research projects, there are only a few early adopters, mostly in Europe, that scan them up front for diagnosis. Hospitals have been slow to incorporate automated whole-slide imaging because the technology is expensive: upwards of $250,000 for the scanner, plus the additional cost of storing gigapixel-size image files.

The investment is worth it, insists Anil Parwani, head of digital pathology at the Ohio State University Comprehensive Cancer Center, one of the few sites in the United States where pathologists now digitally scan slides as part of their routine diagnostic workflow. Parwani says his hospital’s fully digital platform will pay for itself within five years, thanks to improvements in doctor productivity and reductions in diagnostic errors. Digitizing slides also allows for online file sharing, rather than shipping physical slides for remote diagnosis or second opinions. Plus, “it’s made the workflow more robust,” Parwani says, as pathologists can instantly compare biopsies taken months apart or review cases on the go.

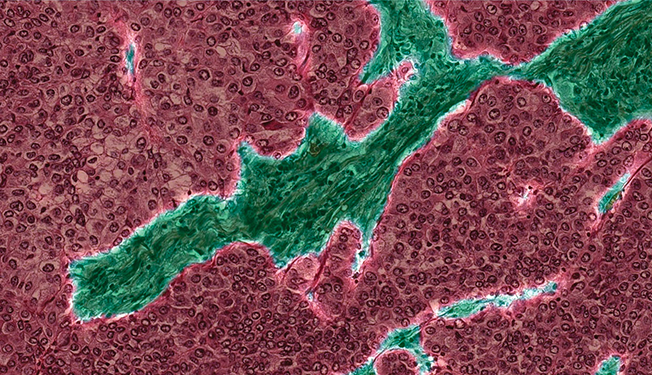

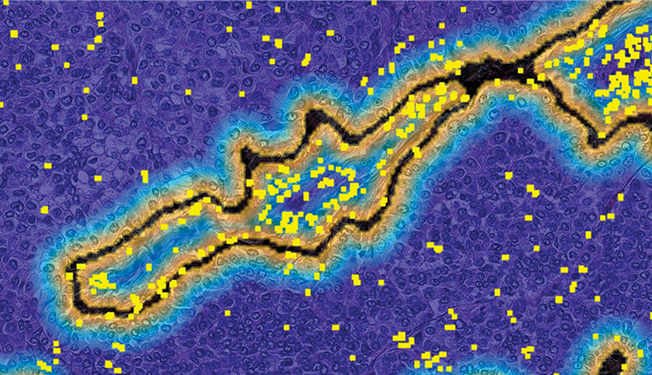

To help doctors plan an attack on a lung tumor, PathAI’s software maps the types of tissue present [top], showing in red the epithelial cells that are key to cancer’s progression. It also makes a map of immune cells [bottom], shown as yellow squares, to determine whether new immunotherapy drugs might be effective against the tumor.

If digital-slide scanning is paired with powerful quantification algorithms, the added value should become obvious, says David West, founder and CEO of Proscia, a digital pathology startup in Baltimore. “This will likely become the standard much more quickly than people expect,” he says. And when it does, “the role of the pathologist is certainly going to change. The best pathologists are going to become informaticians, and the best pathology labs are going to be informatics driven.”

“There’s definitely a disruptive nature to this,” West adds.

Beck, of PathAI, started down the road to disruption as a medical student at Brown University in the 2000s, when he began dabbling in quantitative image analysis. Working with pathologist Murray Resnick, Beck helped develop a computer program that evaluated the size, shape, and other features of esophageal cells to determine a patient’s risk of cancer. It wasn’t a deep-learning algorithm, but his interest in quantified medicine propelled him to Stanford, where he followed his pathology residency with a Ph.D. in the laboratory of AI scientist Daphne Koller. That research culminated in the development of the Computational Pathologist, or C-Path, system, a fairly primitive machine-learning tool for grading the severity of breast tumors. In 2011, the group published its findings, demonstrating one of the first applications of AI in pathology.

At the time, says Koller, “no one was taking this very broad, data-driven approach to this problem.” In previous attempts to automate tissue analysis, researchers had generally told their programs what features to look for—as Beck and Resnick had done five years earlier in their study of esophageal cancer. With C-Path, Beck fed his algorithm hundreds of features, practically every one he could think of and measure. He let the computer code take care of the rest.
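To make the contrast with hand-picked features concrete, here is a minimal sketch of letting data rank a pool of measured features. It is not C-Path's actual method, which the article does not detail. It swaps in a simple stand-in technique, ranking each feature by the absolute value of its Pearson correlation with an outcome. The feature names and numbers are made up for illustration.

```python
from math import sqrt
from typing import Dict, List


def pearson(xs: List[float], ys: List[float]) -> float:
    """Pearson correlation; returns 0.0 when either series is constant."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        return 0.0
    return cov / sqrt(var_x * var_y)


def rank_features(table: Dict[str, List[float]], outcome: List[float]) -> List[str]:
    """Order feature names from most to least correlated with the outcome."""
    scores = {name: abs(pearson(values, outcome)) for name, values in table.items()}
    return sorted(scores, key=scores.get, reverse=True)


# Hypothetical measurements for four tissue samples (illustrative values only).
features = {
    "feature_a": [4.0, 1.0, 3.0, 2.0],
    "feature_b": [1.0, 2.0, 3.0, 4.0],
    "feature_c": [5.0, 5.0, 5.0, 5.0],
}
outcome = [1.0, 2.0, 3.0, 4.0]  # hypothetical outcome score per sample

ranking = rank_features(features, outcome)
print(ranking)
```

The point of the sketch is that nobody tells the program which feature matters: every measurement goes in, and the ranking comes out of the data.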

AI is sometimes criticized for being a “black box.” Because machine-learning programs like C-Path effectively teach themselves how to interpret images, it’s impossible to know exactly how these algorithms arrive at their final decisions. Yet, “just because it’s a black box doesn’t mean you can’t get very useful ideas from it,” says Matt van de Rijn, a pathologist at Stanford and one of Beck’s mentors. Thanks to C-Path, for example, Beck discovered that the most predictive features for breast cancer survival were not in the tumor cells themselves, but rather in the surrounding region, where few human pathologists thought to look. “That was an amazing finding that could very well lead to new interpretations in pathology,” Van de Rijn says.

After Stanford, Beck moved back east to start his own research group at the Beth Israel Deaconess Medical Center, an affiliate of Harvard Medical School, where he stepped back from machine-learning algorithms and focused largely on cancer epidemiology. Then, in 2015, an international competition launched by Dutch researchers pulled him back into the disruptive world of AI.

Radboud University’s Van der Laak spearheaded the contest, which challenged machine-learning specialists to find new techniques for early detection of breast cancer. In particular, Van der Laak asked researchers to find invasive breast cancer lurking inside lymph nodes, a determination that’s essential to plotting the correct course of treatment. “It’s a task that every pathologist hates because it’s a lot of work and it’s not really intelligent work,” he says. If an algorithm could do the task as well as or better than a human, Van der Laak figured, it would show doctors that AI was an asset—something that could free overstretched pathologists to focus on more complex tasks—and not something to be feared as a job killer.

The Expert’s View: After training as a pathologist and an AI scientist, Andrew Beck became convinced that machine-learning tools could revolutionize the practice of pathology.

The Cancer Metastases in Lymph Nodes Challenge (Camelyon) did not have the cachet or financial payout of an Ansari X Prize or DARPA Grand Challenge. But people involved say it spurred innovation in computational pathology just as those better-known contests helped jump-start industries for private spaceflight and autonomous cars. “Everyone was driving each other to get better,” Beck says, “because everyone wanted to win.”

Beck’s team came up with a simple method that yielded big results. They devised a two-step verification system to ensure all patches of tissue initially labeled “clean” by the AI were indeed cancer-free. The resulting algorithm proved as good as and sometimes better than an expert pathologist at determining whether slides contained tumor cells, and also at determining where the cancerous masses sat within the larger tissue sample. Beck’s team ultimately beat out 22 other groups to come out atop the Camelyon leaderboard.
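The two-step idea can be sketched in a few lines. This is an illustration under stated assumptions, not the team's code: the article gives no implementation details, so the scoring functions, thresholds, and patch values below are all hypothetical stand-ins. A first model scores every patch, and any patch it calls clean is checked again by a second model before the label is accepted.

```python
from typing import Callable, List, Sequence

Patch = Sequence[float]  # stand-in for the pixel data of one tissue patch
Scorer = Callable[[Patch], float]  # returns a tumor score between 0 and 1


def two_step_screen(
    patches: List[Patch],
    first_pass: Scorer,
    second_pass: Scorer,
    first_threshold: float = 0.5,
    second_threshold: float = 0.5,
) -> List[str]:
    """Label each patch, re-checking every patch the first pass calls clean."""
    labels = []
    for patch in patches:
        if first_pass(patch) >= first_threshold:
            labels.append("tumor")
        elif second_pass(patch) >= second_threshold:
            # The first pass said clean, but the verification step disagrees.
            labels.append("tumor")
        else:
            labels.append("clean")
    return labels


# Hypothetical scorers: real systems would use trained models here.
def mean_score(patch: Patch) -> float:
    return sum(patch) / len(patch)


def max_score(patch: Patch) -> float:
    return max(patch)


patches = [[0.9, 0.8], [0.1, 0.2], [0.1, 0.7]]  # made-up patch values
labels = two_step_screen(patches, mean_score, max_score)
print(labels)
```

In this toy run the third patch slips past the first scorer but is caught by the second, which is the kind of missed tumor the verification step is meant to prevent.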

Beyond bragging rights (and a gold-colored 1-terabyte external hard drive presented as a prize at the 2016 IEEE International Symposium on Biomedical Imaging, in Prague), the victory gave Beck the confidence to venture out on his own, he says. In January 2017, he resigned from his tenure-track position at Harvard and devoted himself to PathAI.

The company is now working on three types of decision-support tools. First, PathAI is developing algorithms to take on pathologists’ most loathed and repetitive chores, such as identifying metastases in lymph nodes (the application in the Camelyon challenge) and other simple determinations of whether cancer cells are present. These aren’t difficult tasks, Beck says, but they’re time-consuming and not considered the best use of human expertise. PathAI is currently partnered with Philips, the Dutch health-technology giant, on one such image-analysis system for automatically detecting cancerous lesions in breast tissue.

The second application involves determining the “grade” of cancer. Jonathan Epstein, a pathologist at Johns Hopkins University, in Baltimore, describes this decision about the aggressiveness of a tumor as “difficult, subjective, and one of the most critical aspects of treatment.” Epstein, an advisor to PathAI and an expert on urological cancers, is working with the company to train its algorithms to diagnose tumors of the prostate and other organs.

Lastly, the company is further developing biomarker detection tools like the one that pharmaceutical companies are using to understand who can benefit from their drugs. If validated in clinical trials, those same algorithms could help doctors personalize drug choices for all patients.

To date, PathAI has tested its software on cancers of the lung, bladder, skin, prostate, breast, colon, and stomach. “The platform is very transferable, and that’s why we’ve been able to work on pretty much all major solid tumors,” says Beck, adding, “The process gets faster and better with every new project and every new indication.”

As with any new technology, there’s a risk of overselling what machine learning can do for the field, but University of Michigan pathologist David McClintock insists that pilot studies have shown that the promise is real. “When appropriately deployed, machine-learning tools can be of assistance,” he says. “I don’t think that’s hype. That’s a fact.” The biggest obstacles to AI-powered improvements in patient care may be getting regulators to approve these new tools and getting doctors to use them.

But as the technology matures, one big question looms: Could AI go beyond assistance and eventually replace human pathologists entirely? Beck dismisses the possibility out of hand. “It’s just this cliché that people can’t get out of their heads,” he says. Machine learning may help with specific diagnostic tasks, he says, but finding the best treatment for a sick patient requires synthesizing many types of clinical information, including cell stains, protein annotations, genetic profiles, and electronic health records. Careful judgment is required to put all the information together and come to a definitive diagnosis and treatment plan. That synthesis is where human pathologists show their worth, Beck says: “AI is not going to figure that out by itself.”

By Elie Dolgin

This article appears in the December 2018 print issue as “The AI Medical Revolution Starts Here.”